Anonymity is the tip aim when finding out privateness, and it’s helpful to consider de-anonymization as a recreation.

We think about an adversary with some entry to data, and it tries to guess accurately who amongst a set of candidates was liable for some occasion within the system. To defend towards the adversary successful, we have to maintain it guessing, which might both imply limiting its entry to data or utilizing randomness to extend the quantity of knowledge it must succeed.

Many readers shall be accustomed to the sport of “Guess Who?”. This recreation could possibly be described as a turn-based composition of two situations of the extra basic recreation “twenty questions.” In “twenty questions,” you secretly select a component from a given set, and your opponent tries to guess it accurately by asking you as much as 20 yes-or-no questions. In “Guess Who?” either side take turns enjoying towards one another, and the primary to guess accurately wins. The set of components is fastened in “Guess Who?”, consisting of 24 cartoon characters with varied distinguishing options, comparable to their hair coloration or fashion. Every character has a novel identify that unambiguously identifies them.

The solutions to a yes-or-no query might be represented as a bit — zero or one. Twenty bits can specific, in base 2, any entire quantity within the vary 0 to 1,048,575, which is 2²⁰-1. If a set might be completely ordered, every factor within the set could also be listed by its numbered place within the order, which uniquely identifies it. So, 20 bits can uniquely tackle considered one of simply over 1,000,000 components.

Though 2²⁰ is the utmost variety of components of a set that could possibly be uniquely recognized utilizing simply the solutions to twenty yes-or-no questions, in real-world conditions, 20 solutions will typically include much less data than that. For many units and mixtures of questions, issues will nearly actually not line up completely, and never each query will bisect the candidate components independently of the opposite questions. The solutions to some questions could be biased; some questions’ solutions may correlate with these of different questions.

Suppose that as a substitute of asking one thing like “does your character have glasses?” you at all times ask, “Alphabetically, does your character’s identify seem earlier than [median remaining character’s name]?”. This can be a binary search, which can maximize how informative the reply to every query shall be: At each step, the median identify partitions the set of remaining characters, and the query eliminates one of many two halves. Repeatedly halving the remaining candidates will slender down the search as shortly as yes-or-no solutions make doable; solely a logarithmic variety of steps is required, which is way sooner than, say, a linear scan (i.e., checking one after the other: “Is it Alice? No? How about Bob? …”).

Keep in mind that if you’re enjoying to win, the purpose of the sport is to not get essentially the most data out of your opponent however to be the primary to guess accurately, and it seems that maximizing the data per reply is definitely not the optimal strategy — not less than when the sport is performed truthfully. Equally, when utilizing video games to review privateness, one should assume the adversary is rational in accordance with its preferences; it’s pretty simple to by accident optimize for a subtly incorrect final result, because the adversary is enjoying to win.

Lastly, suppose the gamers are now not assumed to be sincere. It must be obvious that one can cheat with out getting detected; as a substitute of selecting a component of the set firstly after which answering truthfully in response to each query, you possibly can at all times give the reply that would depart the most important variety of remaining candidates. Adaptively chosen solutions can due to this fact reduce the speed at which one’s opponent obtains helpful data to win the sport. On this so-called Byzantine setting, the optimum technique is now not the identical as when gamers are sincere. Right here, an opponent’s greatest response could be to stay with binary search, which limits the benefit of enjoying adaptively.

Adaptive “Guess Who?” is fairly boring, much like how tic-tac-toe ought to at all times finish in a draw in the event you’re paying consideration. To be exact, as we are going to see within the subsequent part, there are 4.58 bits of knowledge to extract out of your maximally adversarial opponent, and the foundations of the sport can be utilized to pressure the opponent to decide to these bits. This implies the primary participant can at all times win after 5 questions. The transcript of solutions in such video games ought to at all times include uniformly random bits, as the rest would give an edge to at least one’s opponent. Sadly, privateness protections utilizing such adaptivity or added randomness are troublesome to construct and perceive, so precise privateness software program is normally considerably tougher to research than these toy examples.

Measuring Anonymity: Shannon Entropy

The information content of a solution in “Guess Who?” — often known as its Shannon entropy — quantifies how stunning it’s to be taught. For instance, in the event you already discovered that your opponent’s character is bald, it gained’t shock you to be taught that they don’t have black hair; this reply comprises no further data. This wasn’t stunning as a result of, earlier than being informed, you can infer that the likelihood of getting black hair was zero.

Suppose that two choices stay from the set of candidates; it’s mainly a coin toss, and both of the 2 choices must be equally possible and, due to this fact, equally stunning. Studying that it’s possibility A tells you it isn’t B — equivalently, studying that it’s not B tells you that it have to be A — so just one yes-or-no query, one bit of knowledge, is required to take away all uncertainty.

This worth might be calculated from the likelihood distribution, which on this binary instance is Bernoulli with p=1/2.

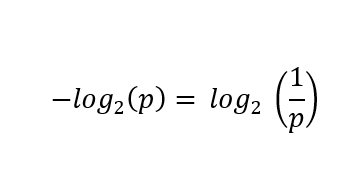

First, compute the negation of the bottom 2 logarithm of the likelihood of every case, or equivalently invert the likelihood first and skip the negation:

First, compute the negation of the bottom 2 logarithm of the likelihood of every case, or equivalently invert the likelihood first and skip the negation:

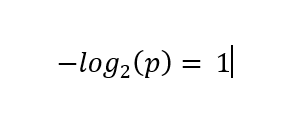

In each instances:

These values are then scaled by multiplying these values by their corresponding chances (as a type of weighted common), leading to a contribution of ½ bits for both case. The sum of those phrases, 1 on this case, is the Shannon entropy of the distribution.

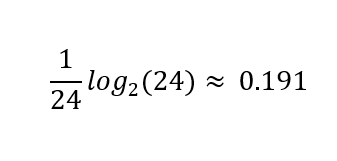

This additionally works with greater than two outcomes. Should you begin the sport by asking, “Is it [a random character’s name]?” you’ll most certainly solely be taught

bits of knowledge if the reply was “no.”

At that time log₂(23) ≈ 4.52 bits quantify your remaining uncertainty over the 23 equally possible remaining potentialities. However, in the event you had been fortunate and guessed accurately, you’ll be taught the complete log₂(24) ≈ 4.58 bits of knowledge, as a result of no uncertainty will stay.

Slightly below 5 bits are wanted to slender all the way down to considered one of 24 characters. Ten bits can determine one in 1,024; 20 bits, round one in 1,000,000.

Shannon entropy is basic sufficient to quantify non-uniform distributions, too. Not all names are equally standard, so an attention-grabbing query is, “How much entropy is in a name“? The linked submit estimates this at roughly 15 bits for U.S. surnames. In line with another paper, first names within the U.S. include roughly 10-11 bits. These estimates indicate an higher certain of 26 bits per full identify, however keep in mind that a standard identify like John Smith will include much less data than an unusual one. (Uniquely addressing the complete U.S. inhabitants requires 29 bits.)

As of writing, the world inhabitants is slowly however certainly approaching 8.5 billion, or 2³³ individuals. Thirty-three is just not a really giant quantity: What number of bits are in a birthdate? Simply an age? Somebody’s city of residence? An IP tackle? A favorite movie? A browser’s canvas implementation? A ZIP code? The phrases of their vocabulary, or the idiosyncrasies of their punctuation?

These are difficult questions. In contrast to these video games and trendy cryptography, the place secrets and techniques are random and preferentially ephemeral, we are able to’t randomize, expire or rotate our real-life figuring out attributes.

Moreover, this personally figuring out data typically leaks each by necessity and typically unnecessarily and unintentionally all through our lives. We regularly must belief individuals with whom we work together to not reveal this data, whether or not by sharing it with third events or by accident leaking it. Maybe it’s not in contrast to how we should belief others with our lives, like docs or skilled drivers and pilots. Nonetheless, actually it isn’t comparable by way of how mandatory it’s to belief as a matter in fact on the subject of our private information.

An Entropist Perspective on Anonymity

Privacy-enhanced systems permit customers to hide in a crowd. For instance, in the event you observe a connection to your server from a Tor exit node, for all you realize, it’s considered one of doubtlessly hundreds of Tor customers that established that connection. Informally, given some occasion {that a} deanonymization adversary has noticed — maybe by intercepting a message being transmitted between two nodes in a community — a selected consumer’s anonymity set refers back to the set of potential customers to whom that occasion could be attributed.

If the receiver of an nameless message is taken to be the adversary, then their greatest guess from a set of candidate senders is the sender’s anonymity set. If this hypothetical system is absolutely nameless, then any consumer is equally more likely to have despatched the message, aside from the receiver.

Two influential papers that proposed to measure anonymity by way of the entropy of the anonymity set had been printed concurrently: “Towards Measuring Anonymity” by Claudia Díaz, Stefaan Seys, Joris Claessens and Bart Preneel, and “Towards an Information Theoretic Metric for Anonymity” by Andrei Serjantov and George Danezis. These works generalize from the idea that the adversary can guess the proper consumer from an anonymity set no higher than probability, to a mannequin that accounts for nonuniform likelihood distributions over this set. Each suggest the quantification of anonymity set sizes by way of bits of entropy.

When the anonymity set is completely symmetric, solely the uniform distribution is smart, so changing the anonymity set measurement to bits is only a matter of computing a log₂(n) the place n is the dimensions of the set. For instance, 1024 equiprobable components in a set have 10 bits of entropy of their distribution.

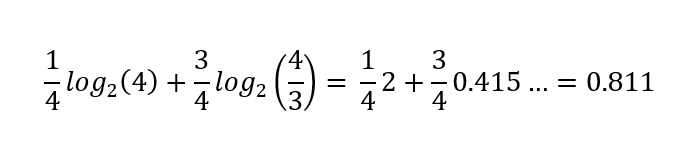

When the distribution is just not uniform, the entropy of the distribution decreases. For instance, if both heads or tails is feasible, however there’s a ¼ likelihood of heads, ¾ of tails, the whole entropy of this distribution is barely

bits as a substitute of a full bit. This quantifies the uncertainty represented in a likelihood distribution; the result of flipping this bent coin is relatively much less unsure than that of a good coin.

Shannon entropy is a particular case of a whole family of entropy definitions. It characterizes the common data content material in a message (a yes-or-no reply, or extra typically) drawn from a likelihood distribution over doable messages. A extra conservative estimate may use min-entropy, which considers solely the best likelihood factor as a substitute of calculating the arithmetic imply, quantifying the worst-case situation. On this submit, we’ll persist with Shannon entropy. For a extra in-depth dialogue and a nuanced interpretation of the entropist perspective, Paul Syverson’s “Why I’m not an Entropist” is a considerate learn.

Anonymity Intersections

In k-anonymity: a model for protecting privacy, Latanya Sweeney opinions a few of her prior outcomes as motivation — outcomes which demonstrated re-identification of “anonymized” information. Individually, every attribute in an information set related to an entry, comparable to a date of delivery, might sound to disclose little or no in regards to the topic of that entry. However just like the yes-or-no questions from the sport, solely a logarithmic quantity of knowledge is required; in different phrases, mixtures of surprisingly small numbers of attributes will typically be adequate for re-identification:

For instance, a discovering in that examine was that 87% (216 million of 248 million) of the inhabitants in the US had reported traits that possible made them distinctive primarily based solely on {5-digit ZIP, gender, date of delivery}. Clearly, information launched containing such details about these people shouldn’t be thought of nameless.

As a tough estimate, a string of 5 digits would have log₂(10⁵) ≈ 16.6 bits of max entropy, however there are fewer ZIP codes than that, log₂(4.3 x 10⁴) ≈ 15.4 — and take into account that the inhabitants is just not uniformly distributed over ZIP codes, so 13.8 could be a better estimate. A gender discipline would normally include barely greater than 1 bit of knowledge in most circumstances, as a result of even when nonbinary genders are represented, nearly all of entries shall be male or feminine. That mentioned, entries with nonbinary values would reveal much more than 1 bit in regards to the topic of that entry. A date of delivery can be difficult to estimate with out trying on the distribution of ages.

Ignoring February 29 and assuming uniformly distributed birthdays and 2-digit delivery yr, the entropy could be log₂(365 x 10²) ≈ 15.1. Once more, a extra realistic estimate is on the market, 14.9 bits. Taken collectively, the extra conservative estimates complete roughly 29.7 bits. For comparability, the entropy of a uniform distribution over the U.S. inhabitants on the time is log₂(248 x 10⁶) ≈ 27.9 bits, or log₂(342 x 10⁶) ≈ 28.4 with up-to-date figures.

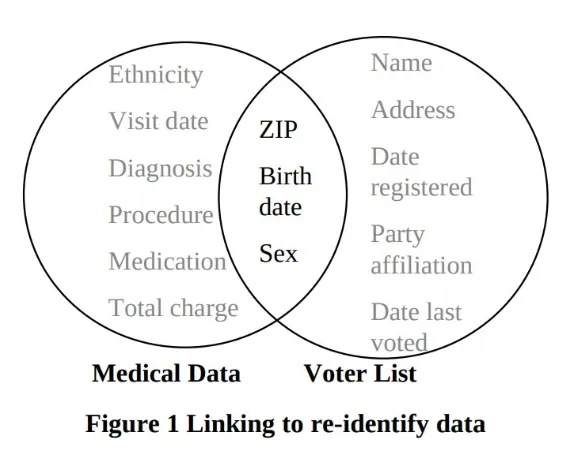

The next diagram from the paper will most likely look acquainted to anybody who has spent a while studying what an “internal be part of” is in SQL. It illustrates a special instance the place Sweeney linked medical data to the voter registration checklist utilizing the identical fields, figuring out then-Massachusetts Governor William Weld’s particular document in an “anonymized” medical dataset:

This type of Venn diagram, with two units represented by two overlapping circles and the overlapping half highlighted, usually represents an intersection between two units. Units are unordered collections of components, comparable to rows in a database, numbers, or the rest that may be mathematically outlined. The intersection of two units is the set of components which are current in each units. So, for instance, throughout the voter registration checklist, we would speak in regards to the subset of all entries whose ZIP code is 12345, and the set of all entries whose delivery date is January 1, 1970. The intersection of those two subsets is the subset of entries whose ZIP code is 12345 and whose date of delivery is January 1, 1970. Within the governor’s case, there was only one entry within the subset of entries whose attribute values matched his attributes within the voter registration checklist.

For information units with completely different buildings, there’s a small complication: If we consider them as units of rows, then their intersection would at all times be empty, as a result of the rows would have completely different shapes. When computing the internal be part of of two database tables, solely the values of columns which are current in each tables are in some sense intersected by specifying one thing like JOIN ON a.zip = b.zip AND a.dob = a.dob, or the much less transportable USING(zip, dob) syntax, however these intersecting values are associated to the rows they got here from, so the general construction of linking two information units is a little more concerned.

Be aware that Sweeney’s diagram depicts the intersection of the columns of the information units, emphasizing the extra main drawback, which is that attributes included within the “anonymized” information set unintentionally had a non-empty intersection with the attributes of different publicly accessible information units.

On the utilized facet of the k-anonymity mannequin, the procedures for anonymizing datasets described within the paper have fallen out of favor attributable to some weaknesses found in subsequent work (“Attacks on Deidentification’s Defenses” by Aloni Cohen). That central thought in k-anonymity is to make sure that for each doable mixture of attributes, there are not less than ok rows containing each particular mixture within the information, which suggests log₂(ok) further bits of knowledge could be wanted to determine an entry from its congruent ones. The deidentification process instructed for guaranteeing this was the case was to redact or generalize in a data-dependent method, for instance, drop the day from a date of delivery, preserving the yr and month, and even solely the yr, if that’s not sufficient. Cohen’s work exhibits how simple it’s to underestimate the brittleness of privateness, as a result of even discarding data till there’s ok of each mixture, the redaction course of itself leaks information in regards to the statistics of the unredacted information set. Such leaks, even when very refined, won’t solely add up over time, however they’ll usually compound. Accounting for privateness loss utilizing bits, that are a logarithmic scale, maybe helps present a greater instinct for the usually exponential charge of decay of privateness.

Anonymity in Bitcoin CoinJoins: Intersection Assaults

Of their paper “When the Cookie Meets the Blockchain: Privacy Risks of Web Payments via Cryptocurrencies,” Steven Goldfeder, Harry Kalodner, Dillon Reisman and Arvind Narayanan describe two unbiased however associated assaults. Maybe extra importantly, in addition they make a really compelling case for the brittleness of privacy extra broadly, by clearly demonstrating how privateness leaks can compound.

In Bitcoin, a pure definition of an anonymity set for a coin is the set of wallet clusters into which the coin might plausibly be merged. The anonymity set is nontrivial if there may be multiple candidate cluster, wherein case merging could be contingent on acquiring further data. New transactions may introduce uncertainty, necessitating the creation of latest clusters for outputs that may’t be merged into any present cluster (but). However, new transactions and out-of-band data can even take away uncertainty and facilitate the merging of clusters. Mostly, if the multi-input heuristic is taken into account legitimate for such a brand new transaction, then the clusters of the enter cash shall be merged. Nonetheless, as we noticed earlier than, many heuristics exist, a few of that are alarmingly correct.

Suppose that Alice acquired some bitcoin right into a pockets beneath her management. Some may need been withdrawn from an alternate (presumably with KYC data). Possibly a pal paid her again for lunch. Possibly she bought her automobile. After making a number of transactions, Alice realizes that her transaction historical past is seen to all and fairly easy to interpret, however quickly she might want to make not only one, however two separate transactions, with stronger privateness assurances than she has been counting on up to now.

After studying a bit about privateness, Alice decides to make use of a pockets that helps CoinJoin. Over a number of CoinJoin transactions, she spends her present cash, acquiring alternative cash that apparently have a non-trivial anonymity set. Earlier than CoinJoining, her pockets was possible clusterable. After CoinJoining, every UTXO she now has can’t be assigned to any particular cluster, since different customers’ pockets clusters are additionally implied within the varied CoinJoin transactions.

The instinct behind CoinJoin privateness is that since a number of inputs belonging to completely different customers are used to create outputs that every one look the identical, nobody output might be linked to a selected enter. That is considerably analogous to a mixnet, the place every CoinJoin transaction is a relay and the “messages” being combined are the cash themselves. This analogy may be very simplistic, there are a lot of problems when implementing CoinJoins that trigger it to interrupt down, however we are going to ignore these nuances on this submit and provides Alice’s chosen CoinJoin pockets the advantage of the doubt and assume that Alice can at all times efficiently spend only one enter into every CoinJoin, and that this leads to good mixing of her funds with these of the opposite events to the CoinJoin. Below these assumptions, if there are ok equal outputs in a CoinJoin transaction, and ok separate clusters for the inputs, then every output’s anonymity set ought to have log₂(ok) bits of entropy when this transaction is created.

Put up-CoinJoin Clustering

The stage is now set for the primary assault described within the paper. This assault was made doable by inclusion of third occasion sources, e.g., a cost processor’s javascript on service provider web sites. Supposing the cost tackle used for the transaction is revealed to the third occasion, that might hyperlink Alice’s internet session to her on-chain transaction. The paper is from 2017, so the specifics of web-related leaks are considerably dated by now, however the precept underlying this concern is as related as ever.

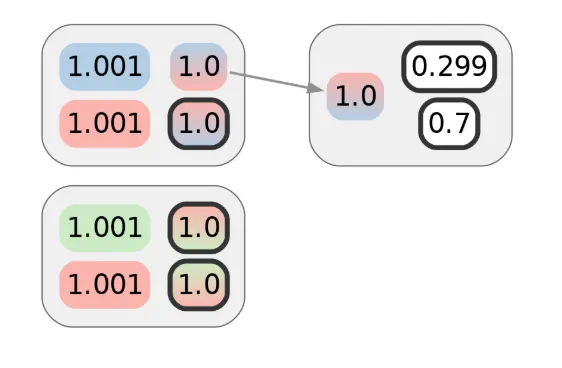

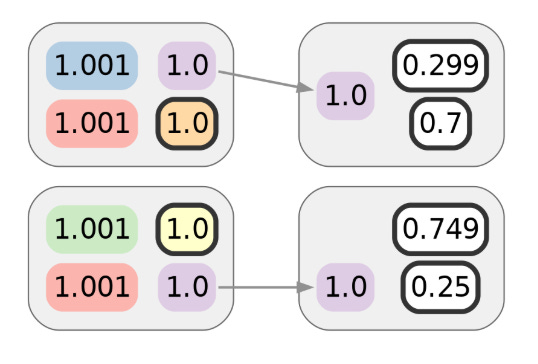

Alice makes use of considered one of her CoinJoin UTXOs to make the primary of these privacy-demanding transactions. Assuming no semantic leaks (comparable to a billing tackle associated to a purchase order) or metadata leaks (maybe she broadcasts using Tor), this transaction ought to protect the privateness Alice obtained from the prior CoinJoin transaction. As drawn right here, that might be 1 bit’s value. The colours of inputs or outputs point out the cluster they’re already assigned to. Alice’s cash are in purple, and gradients symbolize ambiguity:

Whereas the primary transaction doesn’t reveal a lot by itself, suppose Alice makes one other transaction. Let’s say it’s with a special service provider, however one which makes use of the identical cost processor as the primary service provider. Naively, it might seem that the next diagram represents the privateness of Alice’s cost transactions, and that the adversary would wish 2 bits of further data — 1 for every transaction — to attribute them each to Alice’s cluster:

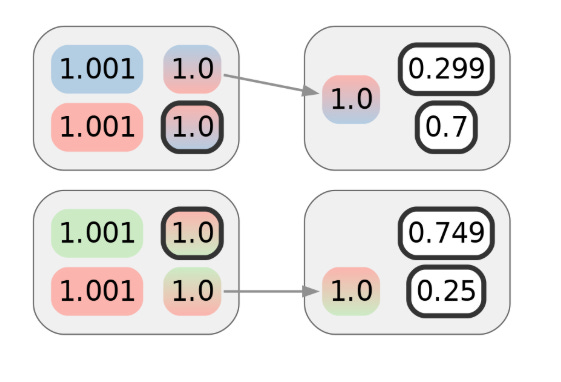

Though Alice intends this to be unlinkable to the primary transaction, she won’t notice her internet searching exercise is being tracked. The paper confirmed that this type of monitoring was not simply doable however even sensible, and may divulge to a 3rd occasion that the 2 transactions might be clustered although they don’t seem associated on-chain. Visually, we are able to symbolize this clustering with further colours:

Internet monitoring, as mentioned within the paper, is only one of some ways data that facilitates clustering can leak. For instance, web site breaches may end up in buy data being made public, even years after the very fact. In not less than one example, authorized proceedings, that are supposed to guard victims, ended up exposing them to much more hurt by needlessly revealing details about the on-chain transactions of shoppers by way of improper redaction of the transacted quantities. The earlier submit on the historical past of pockets clustering gives a number of further examples.

Particularly within the context of CoinJoins, a typical method that this type of linkage might happen is when the change outputs of post-mix cost transactions are subsequently CoinJoined in a fashion that causes them to be linkable by clustering the inputs. That is often known as the poisonous change drawback, which is illustrated within the subsequent diagram. Be aware that white doesn’t symbolize a single cluster, simply lack of clustering data on this instance.

If the coordinator of the supposedly “trustless” CoinJoin protocols is malicious, then even attempting to CoinJoin might hyperlink the transactions, even when this doesn’t turn into self-evident on-chain. The implications are the identical because the assault described within the paper, besides {that a} CoinJoin coordinator can even faux that some members didn’t submit their signatures in time, actively however covertly, or not less than deniably disrupting rounds to acquire extra clustering data.

Intersection Antecessor Clusters

Sadly for Alice, the story doesn’t finish there. What the paper confirmed subsequent was that given such linking of post-CoinJoin transactions, no matter how this clustering was realized, an intersection assault on the privateness of the CoinJoin transactions themselves additionally turns into doable.

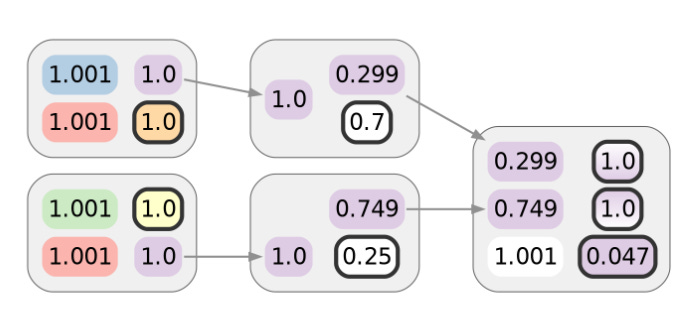

It’s as if the adversary is enjoying “Guess Who?” and is given a cost transaction, then tries to guess the place the funds originated from. Take into account the set of inputs for every CoinJoin transaction. Each one of many spent cash is assigned to some cluster. Each one of many CoinJoin transactions Alice participated in has an enter that’s linkable to considered one of her clusters. The privateness of such transactions derives from being linked to numerous in any other case unrelated clusters. Armed with information that post-CoinJoin transactions hyperlink a number of CoinJoin outputs collectively, the adversary can compute the intersection of the units of related clusters. How typically will it’s the case {that a} random particular person consumer participated in each transaction that Alice did? What about multiple? Not fairly often. And suppose the intersection comprises a novel cluster, which might typically ultimately be the case. In that case, the adversary will have the ability to hyperlink Alice’s transactions to one another and her pre-CoinJoin transaction historical past, successfully undoing the combination.

Visually, this combines the inferences of earlier diagrams. For every coin within the purple cluster of the final two diagrams, we are able to intersect the units of colours within the gradients depicted within the diagram earlier than that:

Solely Alice’s purple cluster is within the intersection, in order that the purple cluster might be merged into the purple one. Not solely do Alice’s clusters merge, since this instance solely has two consumer CoinJoin transactions, the remaining clusters may also be merged with their ancestors by technique of elimination, so Alice’s linkable funds would additionally doubtlessly deanonymize a hypothetical Bob and Carol on this specific case:

This implies that even when CoinJoins functioned like an ideal combine (which they don’t), inadequate post-mix transaction privateness can moreover undermine the privateness of the prior CoinJoin transactions, and way more quickly than appears intuitive. The graph construction, which connects Bitcoin transactions, comprises a wealth of knowledge accessible to a deanonymization adversary.

Privateness issues are sometimes downplayed, perhaps due to defeatist attitudes in mild of the challenges of stopping and even controlling privateness leaks. Hopefully consciousness will enhance, and issues will play out like they did in cryptography in earlier many years — whether or not it’s now not transport weak “export” crypto, or how timing side channels had been largely ignored at first, however at the moment are broadly understood to be virtually exploitable and implementations that don’t take them into consideration are thought of insecure. That mentioned, it’ll at all times be more difficult: In cryptography, now we have extra alternatives to restrict the hurt of unintended publicity by preferring ephemeral keys over long-term ones, or not less than rotating long-term keys periodically. Sadly, the closest analog of rotating keys I can consider in privateness is witness safety applications — a reasonably excessive and dear measure, and much from completely efficient.

For privateness in the true world, the challenges of CoinJoin privateness stays.

That is an edited model of the article by @not_nothingmuch, posted on Spiral’s Substack June 11.

BM Big Reads are weekly, in-depth articles on some present subject related to Bitcoin and Bitcoiners. If you will have a submission you suppose matches the mannequin, be happy to achieve out at editor[at]bitcoinmagazine.com.